Polarising’s Mulesoft CoE team has been exploring Mulesoft technology for almost a year. One of our first findings concerns the importance of logs in analysing and tracking requests. This article is dedicated to that subject: understanding where the transactions are.

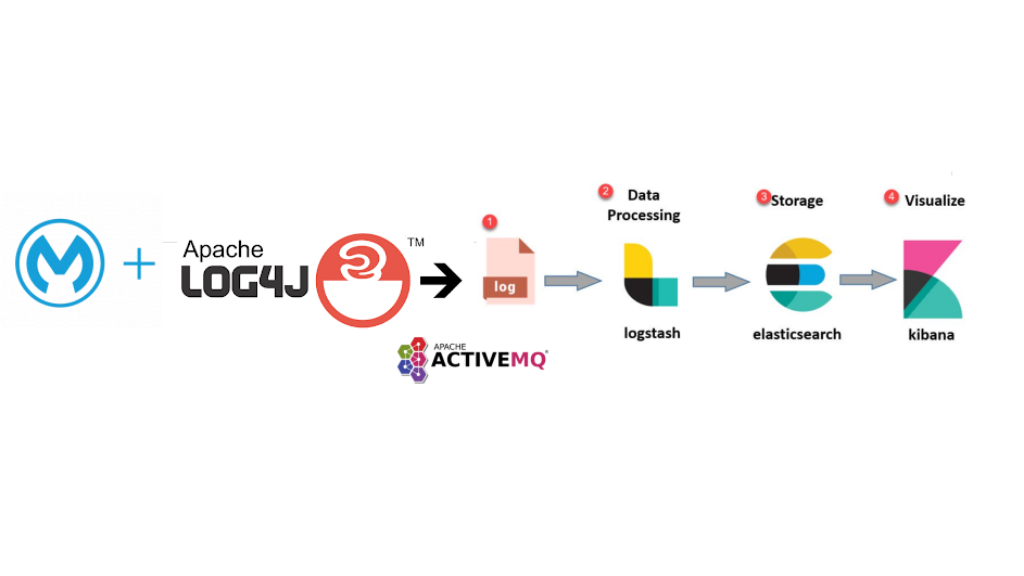

As a log utility, we found that the Apache Log4j2 plugin for Mulesoft provides a set of features that make it easier to publish messages to the queueing system we used, Apache ActiveMQ. That information then becomes the source data for analytics and feeds external tools such as the ELK Stack (Elasticsearch, Logstash and Kibana).

Image: Solution architecture for the case study with Log4j2.

Log4j2 default in Mulesoft

Log4j2 in Mulesoft is generic: if you have worked with logging technology before, you will recognise the same configuration patterns. By default, logs in Mulesoft are written to a file on the application server, but they can also be sent to other destinations such as a database, SMTP or a JMS queue.

The last of these, the JMS queue, was the one we chose for the proof of concept. Challenging, don’t you think?

In the world of technology, it is said: “Come and do what has not yet been done.” And in this case, it was no different. We ran into several difficulties, but always kept a clear view of the final solution.

For this implementation, we considered the following JMS features in Log4j2:

- Added configuration for the adapter.

- Dynamic configuration of the Apache ActiveMQ server parameters.

- Standardization of log content in JSON for Logger.
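To make the adapter and JSON standardization points more concrete, here is a minimal, language-neutral sketch in Python rather than our actual Log4j2 configuration. It assumes an in-memory `queue.Queue` as a stand-in for the ActiveMQ queue, and the names `JsonFormatter`, `QueuePublishingHandler`, `build_logger` and `orders-api` are hypothetical, chosen only for illustration.

```python
import json
import logging
import queue


class JsonFormatter(logging.Formatter):
    """Render every log record as one JSON object (standardized content)."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        return json.dumps(entry)


class QueuePublishingHandler(logging.Handler):
    """Plays the role of the JMS adapter: publishes formatted logs to a queue."""

    def __init__(self, destination: queue.Queue) -> None:
        super().__init__()
        self.destination = destination

    def emit(self, record: logging.LogRecord) -> None:
        # self.format() applies the formatter attached to this handler.
        self.destination.put(self.format(record))


def build_logger(name: str, destination: queue.Queue) -> logging.Logger:
    """Wire a logger so that its output goes to the queue instead of a file."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # keep the sketch's output off the root logger
    handler = QueuePublishingHandler(destination)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    return logger


# In-memory stand-in for the ActiveMQ queue (an assumption of this sketch).
log_queue: queue.Queue = queue.Queue()
app_log = build_logger("orders-api", log_queue)
app_log.info("Order %s received", "A-1001")

# A consumer such as Logstash would read the message from the queue.
published = json.loads(log_queue.get_nowait())
print(published["level"], published["message"])
```

The design idea is the same as in the case study: the application only writes logs, and the handler (the appender, in Log4j2 terms) decides that they travel as JSON to a queue, where a downstream tool can pick them up.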

We found that it was impossible to create dynamic variables, so a value could not be changed during execution. This was a major setback, since one of the goals was to manipulate the log data and enrich it with additional status information.

After configuring the queue system, the next step was to visualize the log information in the ELK Stack. We built some dashboards to give a graphical view of the data being uploaded.

The main objective was achieved: we built an information flow from Mulesoft with Log4j2 to the ELK Stack, integrated through a queue system.

JSON Logger

Image: Solution architecture for the case study with JSON Logger.

As the name suggests, JSON Logger prints logs in JSON format, which helps a lot with any external log analytics tool such as the ELK Stack, Splunk or Datadog. The more familiar we became with the tool, the more surprised we were: its configuration was much more user-friendly than Log4j2’s, and it did not lack any of the details we required.

As we continued to explore the potential of this tool, we reached the following conclusions:

- Ease of configuration.

- Inclusion of status points for validation, such as: Start, After Request, End, etc.

- Ability to record execution time throughout the process.

- Added functions to ensure parse protection for JSON.

- DataWeave functions provide additional capabilities for log message formatting.

- Data masking, giving the capability to obfuscate sensitive content.
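The status points, execution time, parse protection and masking listed above can be illustrated with a small Python sketch. This is not the JSON Logger implementation or its API: the field names (`correlationId`, `tracePoint`, `elapsedMs`, `content`), the `****` mask and the set of sensitive keys are all assumptions made for the example.

```python
import json
import time

# Hypothetical keys whose values should never reach the logs.
SENSITIVE_FIELDS = {"password", "cardNumber"}


def mask(value):
    """Recursively replace the values of sensitive keys with asterisks."""
    if isinstance(value, dict):
        return {
            key: "****" if key in SENSITIVE_FIELDS else mask(inner)
            for key, inner in value.items()
        }
    if isinstance(value, list):
        return [mask(item) for item in value]
    return value


def safe_content(raw):
    """Parse protection: fall back to the raw payload if it is not valid JSON."""
    try:
        return json.loads(raw)
    except (TypeError, ValueError):
        return raw


class TransactionLog:
    """Builds JSON log entries for one transaction, tracking elapsed time."""

    def __init__(self, correlation_id, clock=time.monotonic):
        self.correlation_id = correlation_id
        self._clock = clock
        self._started = clock()

    def entry(self, trace_point, message, raw_content=""):
        # Elapsed time is measured from the creation of this object.
        elapsed_ms = round((self._clock() - self._started) * 1000)
        return json.dumps({
            "correlationId": self.correlation_id,
            "tracePoint": trace_point,  # e.g. START, AFTER_REQUEST, END
            "elapsedMs": elapsed_ms,
            "message": message,
            "content": mask(safe_content(raw_content)),
        })


tx = TransactionLog("c0ffee-01")
start = json.loads(
    tx.entry("START", "Request received", '{"user": "ana", "password": "s3cret"}')
)
end = json.loads(tx.entry("END", "Response sent", "not json at all"))
print(start["content"], end["content"])
```

Because every entry shares the same correlation identifier and carries its status point and elapsed time, a tool like Kibana can group the entries of one transaction and show where the time was spent.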

Moral of the story: what started as a simple exploration ended with the discovery of a new tool with great capabilities, one that we will certainly use in future projects.

Finally, there’s still room for some advice: those of you who are new to proofs of concept, please investigate! Prove the success or failure of theoretical concepts first. If you do, you may, with a bit of luck, discover some treasures that make a big impact on your daily work.

It was an excellent challenge for our Mulesoft Lead Practice team!

António Ferreiro, Mulesoft Lead Practice

Nelson Luz, Integration Engineer

Ricardo Ferreira, Integration Consultant

Software Engineer